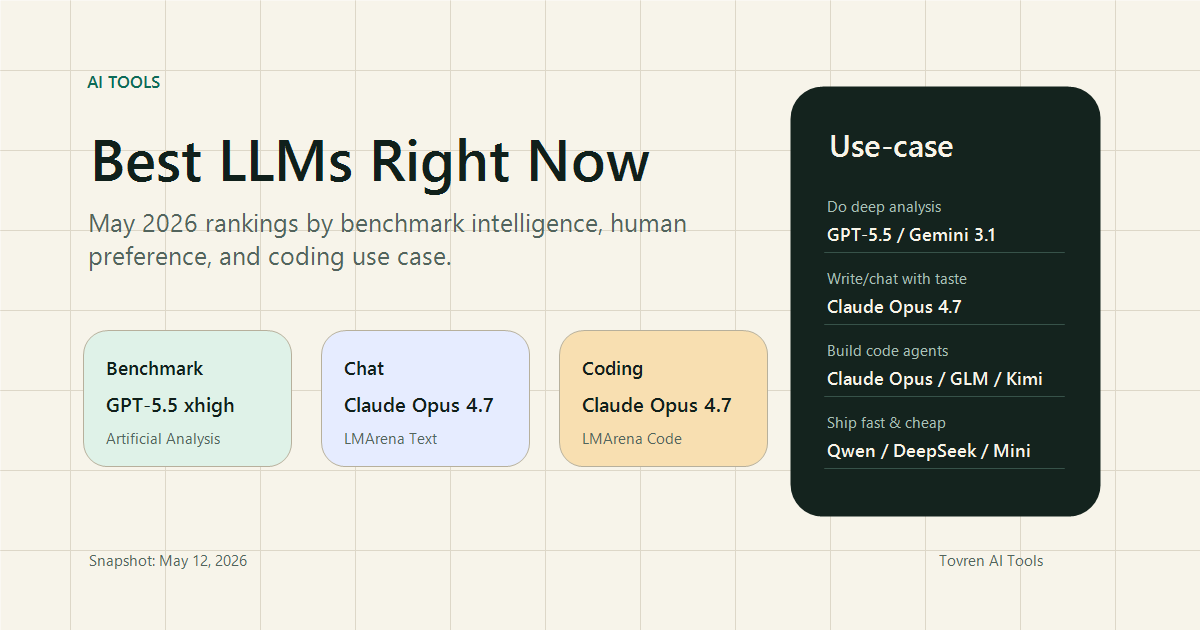

Snapshot date: May 12, 2026. The current LLM ranking is not a single ladder. If you only look at one leaderboard, you can easily pick the wrong model for your actual work. The best model for benchmark-heavy analysis is not always the best model for writing, coding, speed, cost, or long-context workflows.

This guide combines three useful signals: Artificial Analysis for benchmark-oriented intelligence, LMArena Text for human preference across real prompts, and LMArena Code for coding preference. The short version: GPT-5.5 leads the benchmark-intelligence table, Claude Opus dominates the current arena-style preference rankings, and Gemini/Kimi/Qwen/DeepSeek-class models matter when price, speed, or deployment constraints are part of the decision.

Quick Ranking Summary

- Best benchmark-intelligence pick: GPT-5.5, especially in its xhigh and high reasoning modes.

- Best human-preference pick: Claude Opus 4.7 thinking and Claude Opus 4.6 thinking sit at the top of the current LMArena text ranking.

- Best coding-preference pick: Claude Opus 4.7 thinking leads the LMArena Code view, with GLM-5.1 and Kimi K2.6 also appearing in the top tier.

- Best value candidates: Kimi K2.6, MiMo-V2.5-Pro, DeepSeek V4 variants, Qwen3.6, and smaller fast models deserve testing when cost matters.

- Most important warning: do not migrate production workflows from a leaderboard alone. Re-test with your own prompts, files, tools, and failure cases.

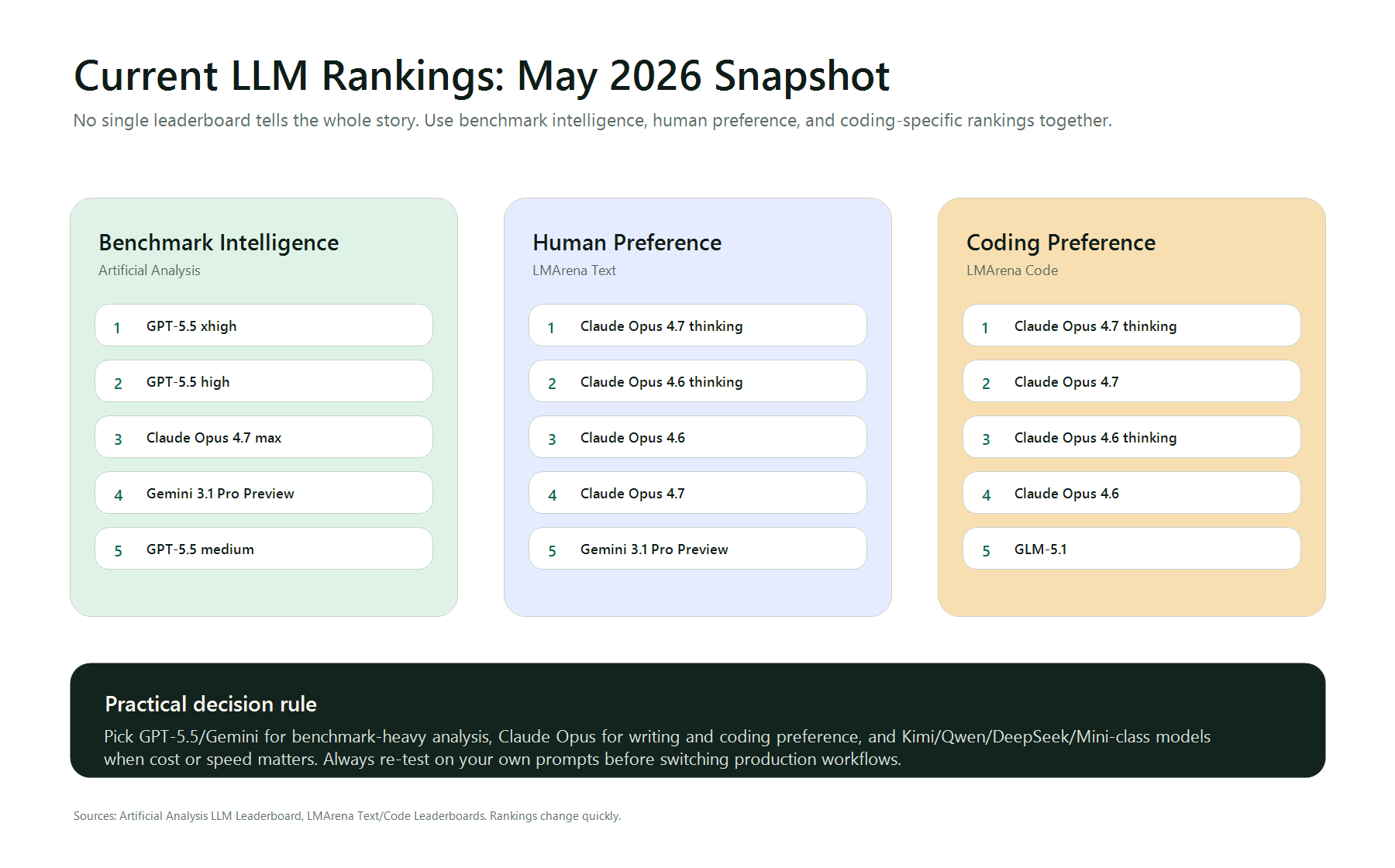

Overall Benchmark Intelligence

Artificial Analysis currently compares more than 100 LLMs on intelligence, price, speed, latency, context window, and related metrics. Its page states that GPT-5.5 xhigh and GPT-5.5 high are the highest-intelligence models, followed by Claude Opus 4.7 max and Gemini 3.1 Pro Preview. The table below is the practical shortlist.

| Rank | Model | Provider | AA Intelligence Index | Context | Blended price | When to choose it |
|---|---|---|---|---|---|---|
| 1 | GPT-5.5 xhigh | OpenAI | 60 | 922k | .25 / 1M tokens | Hard analysis, research synthesis, complex reasoning. |
| 2 | GPT-5.5 high | OpenAI | 59 | 922k | .25 / 1M tokens | High-quality reasoning with less delay than xhigh. |
| 3 | Claude Opus 4.7 max | Anthropic | 57 | 1M | .94 / 1M tokens | Writing, review, coding, and nuanced judgment. |
| 4 | Gemini 3.1 Pro Preview | Google | 57 | 1M | .50 / 1M tokens | Long-context analysis and strong price-performance. |
| 5 | GPT-5.5 medium | OpenAI | 57 | 922k | .25 / 1M tokens | Balanced reasoning when xhigh is too slow. |
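
A blended price folds separate input and output token prices into one number. The sketch below is a minimal illustration of that arithmetic, assuming a 3:1 input-to-output weighting; the weighting, the function names, and the example prices are assumptions for illustration and are not taken from the table above.

```python
def blended_price(input_price: float, output_price: float,
                  input_weight: float = 3.0, output_weight: float = 1.0) -> float:
    """Weighted average price in USD per 1M tokens.

    The 3:1 default weighting is an assumption; adjust it to match
    the input/output mix of your own workload.
    """
    total_weight = input_weight + output_weight
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return (input_price * input_weight + output_price * output_weight) / total_weight


def monthly_cost(price_per_million: float, tokens_per_month: int) -> float:
    """Estimated monthly spend in USD for a given token volume."""
    return price_per_million * tokens_per_month / 1_000_000


# Hypothetical prices: $2 per 1M input tokens, $10 per 1M output tokens.
example_blend = blended_price(2.0, 10.0)
example_spend = monthly_cost(example_blend, 50_000_000)
```

If your workload is output-heavy (long generations from short prompts), a 1:1 or 1:3 weighting will give a more realistic figure than the default.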

Human Preference Ranking

LMArena is useful because it measures model preference through pairwise battles rather than benchmark scores alone. In the current Text Arena overview, Claude Opus models occupy the top positions. That does not mean Claude is always the strongest model on math or reasoning benchmarks, but it does mean users and judges tend to prefer its answers across many real prompts.

| Text Arena Rank | Model | What it suggests |
|---|---|---|
| 1 | Claude Opus 4.7 thinking | Best current preference signal for general chat, writing, and complex responses. |
| 2 | Claude Opus 4.6 thinking | Still extremely strong for nuanced work and long-form output. |
| 3 | Claude Opus 4.6 | Strong non-thinking option where speed matters more. |
| 4 | Claude Opus 4.7 | High-quality default for writing and review work. |
| 5 | Gemini 3.1 Pro Preview | Strong broad model with long-context appeal. |

Coding Ranking

The LMArena Code view is especially useful for developer workflows because it separates coding preference from general chat preference. The current top tier is Claude-heavy, but GLM-5.1 and Kimi K2.6 also appear near the top, which makes them worth testing if you care about cost, availability, or non-US model diversity.

| Code Arena Rank | Model | Score | Best use |
|---|---|---|---|
| 1 | Claude Opus 4.7 thinking | 1571 | Complex debugging, architecture review, agentic coding. |
| 2 | Claude Opus 4.7 | 1565 | High-quality coding without always using the heaviest thinking mode. |
| 3 | Claude Opus 4.6 thinking | 1551 | Large refactors and code reasoning. |
| 4 | Claude Opus 4.6 | 1548 | General coding assistance and code review. |
| 5 | GLM-5.1 | 1534 | Alternative coding model to test for price and availability. |
| 6 | Kimi K2.6 | 1529 | Competitive coding and long-context tasks. |
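
To get a feel for how large these score gaps are, the sketch below converts a score difference into an expected head-to-head win rate. It assumes the arena scores sit on an Elo-style scale (base 10, 400-point scale), which is an assumption about the leaderboard rather than something stated here; the function name is illustrative.

```python
def expected_win_rate(score_a: float, score_b: float, scale: float = 400.0) -> float:
    """Probability that model A is preferred over model B in one battle,
    under the standard Elo logistic formula (assumed scale, see lead-in)."""
    return 1.0 / (1.0 + 10 ** ((score_b - score_a) / scale))


# Rank 1 (1571) versus rank 6 (1529) from the Code Arena table.
top_vs_sixth = expected_win_rate(1571, 1529)
print(f"Expected win rate for the rank 1 model: {top_vs_sixth:.0%}")
```

Under that assumption, the 42-point gap between rank 1 and rank 6 works out to roughly a 56% expected win rate, which is why the lower-ranked models are still worth testing on your own tasks.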

Best Model by Use Case

| Use case | Start with | Also test | Why |
|---|---|---|---|
| Deep research and synthesis | GPT-5.5 xhigh/high | Gemini 3.1 Pro Preview, Claude Opus 4.7 | Strong benchmark signal plus long-context options. |
| Writing, strategy, editorial work | Claude Opus 4.7 thinking | Claude Opus 4.6, Gemini 3.1 Pro | Arena preference strongly favors Claude at the top. |
| Software engineering | Claude Opus 4.7 thinking | GLM-5.1, Kimi K2.6, GPT-5.3 Codex | Code Arena favors Claude, while alternatives may win on cost or stack fit. |
| Long-context document analysis | Gemini 3.1 Pro Preview | Claude Opus 4.7, GPT-5.5 | 1M-context models are useful for big files and multi-document review. |
| High-volume automation | DeepSeek, Qwen, Kimi, MiniMax-class models | GPT mini/nano models | Cost and latency often matter more than absolute top score. |
| Fast user-facing chat | Gemini Flash, Qwen small models, GPT mini/nano | Provider-specific fast endpoints | Speed and consistency are usually more important than peak reasoning. |

How To Choose Without Getting Fooled

Leaderboards are helpful, but they are not neutral truth machines. A recent arXiv paper analyzing LMArena argues that rankings can vary across prompt slices and that preference data may blur what exactly is being measured. LiveBench also exists partly because static benchmarks can become contaminated as models and training data evolve.

Use this workflow before switching models:

- Pick 20 real prompts from your own work, not benchmark-style examples.

- Include five failure cases: vague instructions, missing context, bad documents, tool errors, and adversarial user requests.

- Run the same prompts through two frontier models and one lower-cost model.

- Score final usefulness, factuality, instruction-following, speed, and cost.

- Keep the cheaper model if it reaches 90% of the quality you need.
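
The workflow above can be sketched as a small scoring script. This is a minimal sketch, not a complete evaluation harness: it assumes you have already run your prompts and recorded a reviewer quality score (0 to 1), latency, and cost per response. It also reads the 90% rule as "90% of the best frontier model's average quality", which is one possible interpretation. All names (`Result`, `summarize`, `pick_model`) are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Result:
    model: str
    quality: float    # reviewer score from 0 to 1 (usefulness, factuality, etc.)
    latency_s: float  # wall-clock seconds for the response
    cost_usd: float   # cost of the single call


def summarize(results: list[Result]) -> dict[str, dict[str, float]]:
    """Average quality, latency, and cost per model."""
    by_model: dict[str, list[Result]] = {}
    for r in results:
        by_model.setdefault(r.model, []).append(r)
    return {
        model: {
            "quality": mean(r.quality for r in rows),
            "latency_s": mean(r.latency_s for r in rows),
            "cost_usd": mean(r.cost_usd for r in rows),
        }
        for model, rows in by_model.items()
    }


def pick_model(summary: dict[str, dict[str, float]], frontier: list[str],
               cheaper: str, threshold: float = 0.90) -> str:
    """Keep the cheaper model if it reaches `threshold` of the best
    frontier model's average quality; otherwise return that frontier model."""
    best = max(frontier, key=lambda m: summary[m]["quality"])
    if summary[cheaper]["quality"] >= threshold * summary[best]["quality"]:
        return cheaper
    return best
```

In practice you would feed `summarize` one `Result` per prompt per model, including the five failure cases, and review the latency and cost averages alongside the model that `pick_model` returns.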

Bottom Line

If you want the current safest default for maximum intelligence, start with GPT-5.5 high or xhigh. If you want the strongest human-preference signal for writing and coding, start with Claude Opus 4.7 thinking. If you are building real products, do not stop there: test Gemini, Kimi, Qwen, DeepSeek, GLM, and smaller fast models against your own tasks. In 2026, the best LLM is not the one with the prettiest rank. It is the one that gives you the best result per dollar, per second, and per failure mode.